Have you ever wanted to make your own programming language? Maybe a template engine? A JSON parser? If you have ever built any of those, you might have noticed it’s not exactly easy to get started. There are a lot of concepts to digest before you get going. That’s why lots of devs just give up. We’d like to help with that.

At Beezwax, a few years ago we built a WordPress plugin which allows users to upload their blog posts to the Apple News platform. In order to do this, we had to translate HTML to some particular format. What we wrote is, at its core, a compiler. Compilers are not only for programming languages, they are in many more places than you might think!

This series of blog posts will show you how to make a compiler from scratch. The techniques displayed here will not only help you write compilers, but will give you the tools to solve a whole type of similar problems which – in the programming world – happen quite frequently.

What exactly is a compiler, anyways?

Let’s start from the beginning and define what a compiler is. A compiler is just a black box which translates input in a given language to output in another language. The input and output languages can be anything. If you’ve been in the Javascript world for the past few years you might have seen something called transpiler. A transpiler is actually a compiler, it transforms, for example, Coffeescript source code into Javascript source code or SASS into CSS.

NOTE Compilers can’t take any language as input. With these techniques, you cannot write an english-to-machine-code compiler. But for simple languages, we can. Once we get into parsing we’ll learn more about those kind of languages, for now, just know that every programming language you know can be an input language for a compiler.

What we’ll build

To keep things simple, I decided to make a simple compiler which translates a tiny subset of markdown to HTML. Here’s an example:

As you can see, we put markdown in, and get back HTML. For the implementation language, I’ve chosen Ruby, a language we love at Beezwax because of its focus on readability and programmer happiness. As I want to focus on concepts rather than a fully-optimized implementation, I think Ruby is the best fit for these tutorials.

You’ll learn about tokenization, parsing, and code-generation. Because I’ll talk about compilers, I won’t get into things like interpreters or optimizations. I just want to give the reader a solid base, so they can get a taste of this whole subject, and pursue their own more specific interests if they happen to like it.

Some of the things you might want to do afterwards include making your own:

- Programming language

- Virtual machine

- Template engine

- Scripting language

- DSL

- JSON parser

- Syntax checker

- Synax highlighter

- Smart code renaming

- Smart autocomplete…

- ..and more. The sky is the limit!

Overview of our compiler

Our compiler will mimic the most common compiler structure out there, and we’ll boil it down to the very core of it. Our compiler will consist of three steps. The first step is transforming the input markdown string into a list of tokens.

A token is just a name for the basic building blocks of our language. For example an underscore, an asterisk, a new line, or just some words. This will make things easier for us later on.

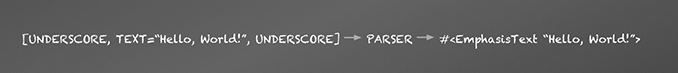

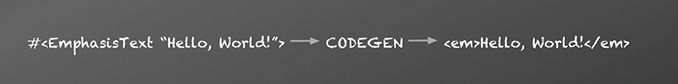

Next, we take those tokens and pass them into a parser. That parser will give us a tree data-structure representing our tokens organized in certain way.

Overall, the process looks like this:

You might think this is all quite complicated, but it’s actually the most standard way of writing compilers. With this structure, we not only divide the problem into smaller chunks so it’s easier to reason about and test, we can easily swap some parts around, for example, change the code generator to emit, for example, RTF documents instead of HTML documents. We could also write a new Tokenizer and Parser for a different language, and as long as the returned Abstract Syntax Tree is in the same format, we can still generate proper HTML.

The Tokenizer

Let’s start implementing! The first step in our compiler process is tokenizing – also called Lexical Analisys. Tokenizing is basically making sense of a bunch of characters by transforming them into Tokens. For example: Hello_ could be transformed to [<TEXT=HELLO>, <UNDERSCORE>], an array of plain old Ruby objects.

Because we want to recognize just a part of markdown, let’s start with some examples of the things we will match:

As we are only going to match paragraphs, emphasized text and bold text — no links, lists, quotes, etc — it makes sense to have only the following tokens: UNDERSCORE; STAR; NEWLINE; TEXT and EOF.

So, for example, for the input _Hello* our tokenizer should return [<UNDERSCORE>, <TEXT="Hello">, <STAR>].

Let’s start with a test which defines what our Tokenizer should do. We’ll use Minitest for the specs.

The full source code for the compiler lives in GitHub; we encourage you to clone and play with it. The snippets displayed here won’t give you the whole picture of this particular compiler, they instead focus on explaining concepts so you can write your own.

There are numerous ways to write tokenizers. Each one is different, tailored to specific needs. In this series I’ll use a rather simple, object-oriented approach with emphasis on readability and simplicity.

We’ll start by building a Tokenizer object, which will take a markdown input string and return a list of Token objects that have type and value attributes.

We’ll then use some Scanner objects to find tokens. Basically, we’ll register scanners that each match specific tokens. Then we run the text through all the scanners and collect what they return. We’ll stop when something could not be matched or everything has been consumed.

The method of interest here is scan_one_token. It takes a plain markdown string and returns a single token, matching the first character of the input string. To do so, it iterates though the scanners, and if the token matched is not null — i.e., if it’s valid — it will return that token. Otherwise, it will keep trying scanners. We fail if we consume the whole array and return nothing.

The tokens_as_array method is a wrapper for our previous method. It’s a recursive function which calls scan_one_token until there’s no more string to send, or the scan_one_token method raises an error. This method also appends an end-of-file token, which will be used to mark the end of the token list.

The TokenList class itself is just a convenient wrapper around a collection, so there’s not much point showing it here. Same for Token — it’s just a data object with two attributes, type and value.

What’s now left to show you are the scanners. Here’s the first one, which matches single characters — can’t get simpler than this!

As you can see, all the work is performed in the from_string method. All scanners must implement this method. The method takes a plain markdown string as input and returns a single token, using some logic to determine whether it should match it or not. When matched, it returns a valid token. Otherwise, it returns a “null token”. Note that a token knows when it’s invalid — in this case when either the type or the value are empty — that’s the InvalidTokenError we are catching.

NOTE Null objects are an object-oriented pattern which is used to get rid

of unwantedifstatements and avoid possible nil reference errors. If you’ve never

heard of this before, you might want to check out this other blog post

Now onto the other scanner, TextScanner. This one is a bit more complicated but still quite simple:

We take advantage of Ruby functional-style list processing to fetch as many valid characters from the string as we can. We consider a character valid when it’s not matched by the SimpleScanner.

And that’s the gist of the Tokenizer! If you want to play around with it you should just git clone git@github.com:beezwax/markdown-compiler.git and play with it with your favorite editor.

Try running the tests with rake test test/test_tokenizer.rb and adding new characters to be recognized, like (, ), [, and ].

You did it!

If you’ve followed along, congrats! You’ve taken the first step towards writing a compiler. For now, you can relax, pat yourself on the back and sip some coffee. Next time, we’ll talk about Parsing. We’ll learn about Grammars, Formal Languages and Abstract Syntax Trees. Don’t worry — they are not as scary as they sound.

Here are Part 2, Parsing/Implementation and Part 3, Code Generation.

4 thoughts on “Writing a Markdown Compiler – Part 1”